NPG Layout

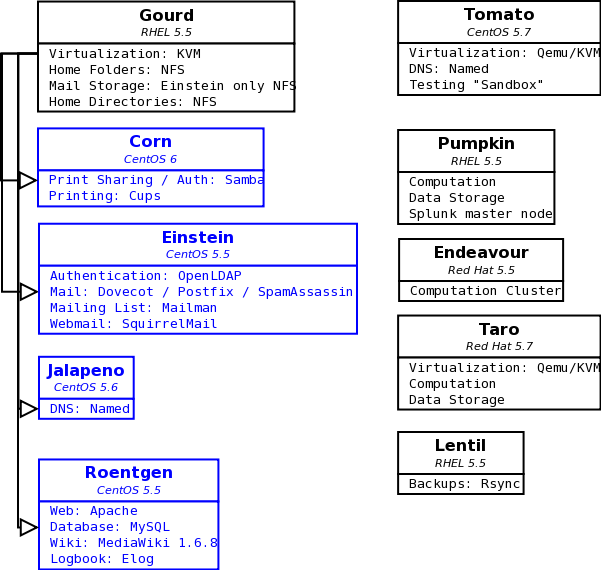

Current System Design

Systems and Services

Here is a diagram of our current system layout.

Black Systems represent physical hardware.

Blue Systems represent Virtual Machines.

Arrows indicate which host each Virtual Machine runs on. Other hosts with VMware capability can stand in for the main virtualization server during downtime.

The operating system is listed just beneath each system's name.

Services are listed in the second row of the diagram, followed by the specific software used to provide that service.

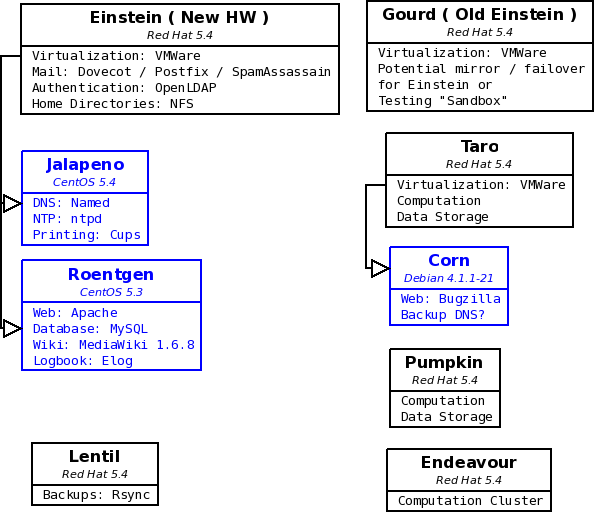

System Redesign

This section contains the current working diagrams for the proposed system redesign that will accompany the replacement of the current Einstein hardware. Working copies of the Dia files for these diagrams are located in my Public folder ( /net/home/aduston/Public/Diagrams ) in case they need to be changed or updated.

Systems and Services

First Revised design

This updated diagram incorporates Maurik's suggested changes:

Questions / Concerns

This design attempts to minimize the number of virtual machines, which frees up a couple of host names. I made Corn a backup DNS server running on Taro because this is the best way to fail over in case something happens to Jalapeno. Even if Einstein goes down entirely, we will still have at least one DNS server running.

Corn is running Debian and is designed to be a standalone Bugzilla appliance. It is not yet known whether this will make it difficult to add the DNS functionality ( it should be as simple as installing the service and copying the configuration from Jalapeno ). This needs to be investigated. An alternative is to leave Corn as a standalone appliance and use the Okra virtual machine to provide printing as well as backup DNS.

It is not yet known whether the old Einstein will be usable as a mirror / failover for the new Einstein. This should be investigated. If it is usable, we should also investigate whether it will need a Red Hat license or whether CentOS will be sufficient.

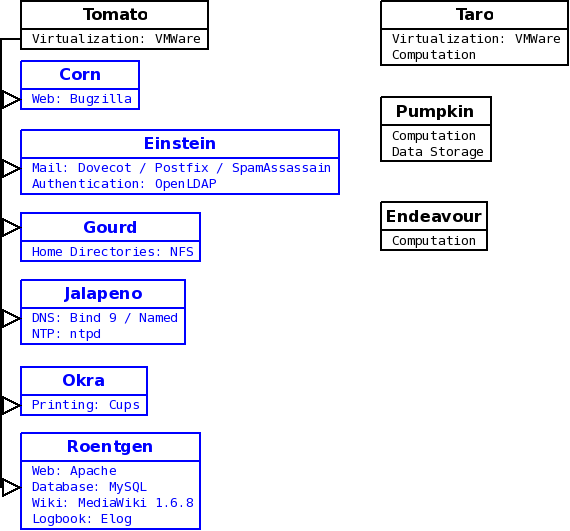

Initial Proposed Design

This design is deprecated, but left here for reference purposes.

New Einstein

Disks and Raid Configuration

Current disk usage estimates for Einstein:

| Data | Estimated Usage |
|---|---|
| Mail (/var/spool) | approx. 30 GB |
| Home Folders (/home) | approx. 122 GB |
| Virtual Machines (/data/VMWare on Taro) | approx. 70 GB |
| LDAP Database (/var/lib/ldap) | approx. 91 MB |

Disk sizes in the following tables are based roughly on these current usage estimates with plenty of extra space to grow. They can be adjusted as appropriate to better suit our needs, and to make these designs more cost effective.

Proposed Configuration 1

This configuration is designed to modularize storage and keep each group of related data on its own mirror. This could be useful if we have a failover system, such as the old Einstein hardware, because individual components ( Mail, home folders, etc ) could be physically relocated to another machine in the event of a failure rather than copying large amounts of data over the network. This design could be modified to use fewer drives by storing virtual machines on either the /var array or the /home array, which would free up two bays to hold spare drives.

| Drive Bay | Raid Type | Contents | Volume Size | Disk Size |
|---|---|---|---|---|
| 1 | Raid 1 | Operating System ( / ) | 250 GB | 250 GB |
| 2 | | | | 250 GB |
| 3 | Raid 1 | Mail/LDAP ( /var ) | 250 GB | 250 GB |
| 4 | | | | 250 GB |
| 5 | Raid 1 | Home Folders | 500 GB | 500 GB |
| 6 | | | | 500 GB |
| 7 | Raid 1 | Virtual Machines Data | 250 GB | 250 GB |
| 8 | | | | 250 GB |
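As a rough sanity check, the current usage estimates can be compared against the Configuration 1 volume sizes. The sketch below is illustrative only: the dictionary names and the "vm data" label are assumptions made for this example (the page does not give a mount point for the virtual machine volume), and the operating system volume is left out because no usage estimate is listed for it.

```python
# Current usage estimates from this page, in GB (LDAP: 91 MB is about 0.091 GB).
USAGE_GB = {
    "mail": 30.0,
    "ldap": 0.091,
    "home": 122.0,
    "vms": 70.0,
}

# Proposed Configuration 1: (volume size in GB, data stored on that volume).
# The "vm data" key is a hypothetical label, not a real mount point.
CONFIG_1 = {
    "/var": (250, ["mail", "ldap"]),
    "/home": (500, ["home"]),
    "vm data": (250, ["vms"]),
}


def utilization(config, usage):
    """Return {volume: (used_gb, percent_used)} for each volume in config."""
    report = {}
    for volume, (size_gb, contents) in config.items():
        used = sum(usage[name] for name in contents)
        report[volume] = (used, 100.0 * used / size_gb)
    return report


if __name__ == "__main__":
    for volume, (used, pct) in utilization(CONFIG_1, USAGE_GB).items():
        print(f"{volume}: {used:.1f} GB used, {pct:.0f}% of volume")
```

With these estimates every volume sits well under a third full, which matches the stated intent of leaving plenty of room to grow.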

Proposed Configuration 2

This configuration provides a larger amount of contiguous storage space than the previous design, with redundancy provided by either a Raid 5 or a Raid 6 array. Raid 5 would provide more usable storage, but only Raid 6 can withstand two simultaneous disk failures ( Raid 5 tolerates one ). It may be preferable to err on the side of caution with our users' data and use Raid 6 for home folders.

| Drive Bay | Raid Type | Contents | Volume Size | Disk Size |
|---|---|---|---|---|
| 1 | Raid 1 | Operating System ( / ) | 250 GB | 250 GB |
| 2 | | | | 250 GB |
| 3 | Raid 1 | Mail/LDAP ( /var ) | 250 GB | 250 GB |
| 4 | | | | 250 GB |
| 5 | Raid 5 or Raid 6 | Home Folders, Virtual Machines, Other data | 1500 GB (Raid 5) or 1000 GB (Raid 6) | 500 GB |
| 6 | | | | 500 GB |
| 7 | | | | 500 GB |
| 8 | | | | 500 GB |
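The trade-off between Raid 5 and Raid 6 follows from the standard capacity formulas: with n identical disks, Raid 5 gives up one disk's worth of space to parity and Raid 6 gives up two. The following sketch (function names are made up for this example, and the minimum disk counts are the commonly used ones) works the numbers for four 500 GB disks.

```python
def usable_capacity_gb(level, disks, disk_gb):
    """Usable capacity in GB of an array built from identical disks."""
    if level == 1:
        if disks < 2:
            raise ValueError("Raid 1 needs at least 2 disks")
        return disk_gb  # every disk holds a full copy
    if level == 5:
        if disks < 3:
            raise ValueError("Raid 5 needs at least 3 disks")
        return (disks - 1) * disk_gb  # one disk's worth of parity
    if level == 6:
        if disks < 4:
            raise ValueError("Raid 6 needs at least 4 disks")
        return (disks - 2) * disk_gb  # two disks' worth of parity
    raise ValueError(f"unsupported Raid level: {level}")


def failures_tolerated(level, disks):
    """Number of simultaneous disk failures the array survives."""
    if level == 1:
        return disks - 1
    if level == 5:
        return 1
    if level == 6:
        return 2
    raise ValueError(f"unsupported Raid level: {level}")


if __name__ == "__main__":
    for level in (5, 6):
        size = usable_capacity_gb(level, disks=4, disk_gb=500)
        lost = failures_tolerated(level, disks=4)
        print(f"Raid {level}: {size} GB usable, survives {lost} disk failure(s)")
```

For bays 5 through 8 this gives 1500 GB usable under Raid 5 and 1000 GB under Raid 6, so choosing Raid 6 costs 500 GB in exchange for surviving a second disk failure.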

Proposed Configuration 3

This configuration would create one large data store for all user and system data, which could be stored on separate appropriately sized partitions. This design is less modular, but uses fewer drives than previous designs while leaving bays open to store spares in the event of a drive failure.

| Drive Bay | Raid Type | Contents | Volume Size | Disk Size |
|---|---|---|---|---|
| 1 | Raid 1 | Operating System ( / ) | 250 GB | 250 GB |
| 2 | | | | 250 GB |
| 3 | Raid 6 | Home Folders ( /home ), Mail/LDAP ( /var ), Virtual Machines | 1000 GB | 500 GB |
| 4 | | | | 500 GB |
| 5 | | | | 500 GB |
| 6 | | | | 500 GB |
| 7 | None | None/Spare Drive | 0 GB | 250/500 GB |
| 8 | None | None/Spare Drive | 0 GB | 250/500 GB |